In this post we will discuss the little known trick of setting an ArrayList’s initial capacity to improve the performance of your Java applications and optimizing the usage of the often used data structure. The good news is that it takes a minimal amount of work and can get you a performance boost of up to 12%.

The problem with ArrayLists

ArrayLists and lists in general are great data structures for storing data. They are convenient for when we don’t know how large our data set is. However, this comes with a performance penalty.

Take for example the situation where we would like to store incoming data into a list. Depending on the list implementation, every once in a while, additional memory has to be allocated in order to store the newly incoming data. This allocation process can be quite expensive and will hurt your application’s performance.

Setting the initial capacity to improve performance

In order to optimize the performance of ArrayLists, it is advisable to set a large enough initial capacity when initializing an ArrayList to incorporate all your data. This will allocate a large enough chunk of memory so that you will probably not need to perform the allocation process again.

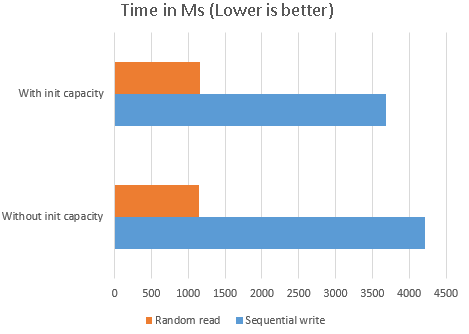

In the following test, we compared 10 million sequential write and random read operations for a list with and a list without an initial capacity set.

/**

* Copyright nullbeans.com 2020

*

* Use of the following code is permitted at your own risk.

* If you would like to host this code on your website, please go ahead, as long as this copyright notice is not removed from the code.

* Thank you :)

*

*/

package com.nullbeans.nullbeansserver;

import org.junit.Test;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import org.springframework.util.StopWatch;

import java.util.ArrayList;

import java.util.Random;

public class InitialCapacityTest {

private static final Logger logger = LoggerFactory.getLogger(InitialCapacityTest.class);

@Test

public void arrayVsArrayListInt(){

int sampleSize = 10000000;

Random random = new Random();

StopWatch listStopWatch = new StopWatch("ArrayList");

listStopWatch.start("Serial access write");

ArrayList<Integer> arrayList = new ArrayList<>();

for(int i = 0; i < sampleSize; i++){

arrayList.add(random.nextInt());

}

listStopWatch.stop();

listStopWatch.start("Random access read");

for(int i = 0; i < sampleSize; i++){

int someInt = arrayList.get(random.nextInt(sampleSize));

}

listStopWatch.stop();

StopWatch listStopWatchwithCap = new StopWatch("ArrayList with initial Capacity");

listStopWatchwithCap.start("Serial access write");

ArrayList<Integer> arrayListwithCap = new ArrayList<>(sampleSize);

for(int i = 0; i < sampleSize; i++){

arrayListwithCap.add(random.nextInt());

}

listStopWatchwithCap.stop();

listStopWatchwithCap.start("Random access read");

for(int i = 0; i < sampleSize; i++){

int someInt = arrayListwithCap.get(random.nextInt(sampleSize));

}

listStopWatchwithCap.stop();

logger.info(listStopWatch.prettyPrint());

logger.info(listStopWatchwithCap.prettyPrint());

}

}

The following results were obtained:

2018-11-25 12:48:26,214 INFO [main] com.nullbeans.nullbeansserver.InitialCapacityTest: StopWatch 'ArrayList': running time (millis) = 5359 ----------------------------------------- ms % Task name ----------------------------------------- 04208 079% Serial access write 01151 021% Random access read 2018-11-25 12:48:26,218 INFO [main] com.nullbeans.nullbeansserver.InitialCapacityTest: StopWatch 'ArrayList with initial Capacity': running time (millis) = 4851 ----------------------------------------- ms % Task name ----------------------------------------- 03689 076% Serial access write 01162 024% Random access read

Setting the initial capacity of the array list yielded 10 – 12% increase in sequential write operations. This is because Java did not have to put much effort allocating memory on the fly to accommodate for the new incoming data. Random access read operation performance was not affected.

Please also note that setting the initial capacity of the list does not mean that the size of the list is bound to that capacity figure. Your list can still grow beyond that if it needs to.

Conclusions

If system memory is not an issue for your application stack, then it might payoff to increase the initial capacity of your array lists. Or if you know the exact size of your data, then maybe an array would be the best option. 10-12% performance improvement in production environment sounds like a good deal, even at the cost of a little extra memory.